Anycast support in NetScaler

Anycast is a network addressing and routing method in which a set of servers shares a single IP address. A client request is directed to the topologically closest server, as determined by the routing tables. This routing reduces latency, provides high availability, and minimizes downtime.

NetScaler supports Anycast with the Global Server Load Balancing (GSLB) and DNS features.

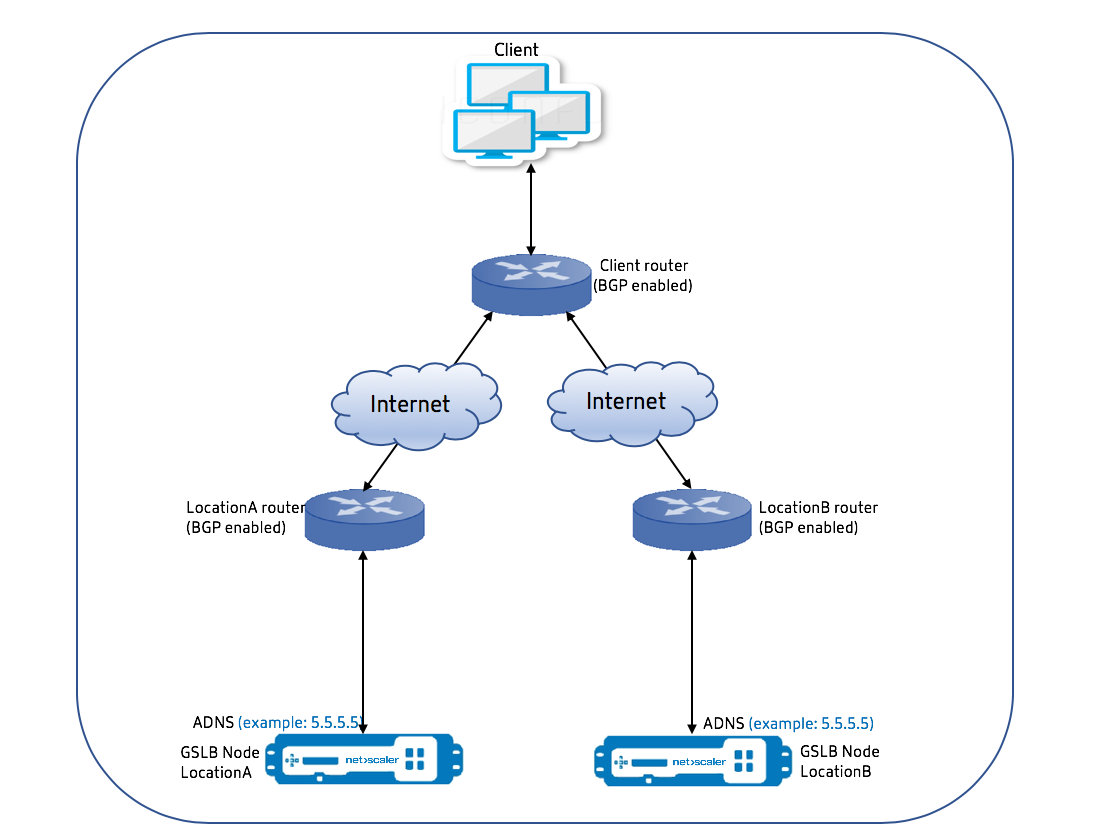

The following diagram illustrates a topology diagram of Anycast in NetScaler.

Anycast GSLB

The NetScaler GSLB feature provides load balancing across globally distributed sites along with disaster recovery and ensures continuous availability of applications.

During an outage, GSLB provides immediate disaster recovery by routing traffic to the closest or the best performing data center. However, GSLB cannot control the following:

- How DNS traffic is routed to GSLB nodes in different geographical locations.

- How much latency is added while DNS queries are routed to GSLB nodes.

In a typical GSLB setup, each data center has a GSLB node configured with a site-specific Authoritative Domain Name Server (ADNS) to receive DNS queries. Each site's ADNS is configured as a name server in the DNS resolver. As the number of GSLB nodes increases, the number of name server records also increases. In such cases, if a data center fails, the local DNS (LDNS) has to retry resolution with a different name server. This retry adds latency to DNS resolution. Also, every time a GSLB node is added, the name server records must be updated.

To overcome these drawbacks, you can use Anycast ADNS. In Anycast ADNS, a single ADNS IP address is used for all GSLB nodes and the DNS traffic is routed to GSLB nodes using dynamic routing.

For example, if a GSLB site is DOWN, the routing table is updated and the route to this site is removed. As a result, DNS queries are not sent to sites that are DOWN, and no retries are needed.

If a new GSLB node is added, the new node is assigned the same ADNS IP address. Dynamic routing automatically updates the routing tables with routes to the new site, based on the routing algorithms. Hence, you do not have to update the DNS name server records. Anycast makes the rollout of new GSLB sites simpler and faster.
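The withdrawal and addition behavior described above can be modeled as a toy routing table. This is a minimal sketch, not NetScaler code: the site names, the `cost` metric, and the `AnycastTable` class are hypothetical and stand in for whatever the dynamic routing protocol actually computes.

```python
from dataclasses import dataclass

ADNS_IP = "5.5.5.5"  # shared Anycast ADNS address used in this article's examples


@dataclass
class Route:
    site: str  # hypothetical GSLB site name
    cost: int  # hypothetical routing metric (lower means closer)


class AnycastTable:
    """Toy view of one router: every UP site advertises the same ADNS IP."""

    def __init__(self):
        self.routes = {}

    def advertise(self, site, cost):
        # A site that is UP (or newly added) injects a route for the shared IP.
        self.routes[site] = Route(site, cost)

    def withdraw(self, site):
        # When a site goes DOWN, dynamic routing removes its route.
        self.routes.pop(site, None)

    def next_hop(self):
        # Queries follow the lowest-cost remaining route; None means no site is left.
        if not self.routes:
            return None
        return min(self.routes.values(), key=lambda r: r.cost).site


table = AnycastTable()
table.advertise("site1", cost=10)
table.advertise("site2", cost=20)
first = table.next_hop()          # closest site answers

table.withdraw("site1")           # site1 goes DOWN
after_failure = table.next_hop()  # traffic shifts without any client retry

table.advertise("site3", cost=5)  # new site reuses the same ADNS IP
after_growth = table.next_hop()   # no name server record had to change
```

In this model the client always queries the single address in `ADNS_IP`; only the routing table changes when sites fail or are added.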

How to configure an ADNS IP address in an anycast mode

Enable host routing on the ADNS IP address in a NetScaler appliance, and set the appropriate Route Health Injection (RHI) level. Typically, no virtual servers are configured on the ADNS IP address, so the RHI level must be set to NONE. Enabling the host route on the ADNS IP address makes it a kernel route. You can then enable the dynamic routing protocol of your choice and configure it to redistribute the kernel routes.

ADNS IP configuration – Example

At the command prompt, type:

    add service adns_public 5.5.5.5 ADNS 53
    set ip 5.5.5.5 -hostRoute ENABLED -vserverRHILevel NONE

BGP configuration in GSLB site – Example

    Site1#sh run
    !
    hostname Site1
    !
    log syslog
    log record-priority
    !
    ns route-install bgp
    !
    interface lo0
     ip address 127.0.0.1/8
     ipv6 address fe80::1/64
     ipv6 address ::1/128
    !
    interface vlan0
     ip address 10.102.148.94/25
     ipv6 address fe80::e84c:f4ff:fe74:4588/64
    !
    interface vlan2
     ip address 172.18.30.15/24
    !
    router bgp 5
     redistribute kernel -----> redistributes the kernel routes
     neighbor 172.18.30.30 remote-as 4
     neighbor 172.18.30.30 advertisement-interval 1
     neighbor 172.18.30.30 timers 4 16
    !
    end
    Site1#

GSLB site routing table - Example

    Site1#sh ip route
    Codes: K - kernel, C - connected, S - static, R - RIP, B - BGP
           O - OSPF, IA - OSPF inter area
           N1 - OSPF NSSA external type 1, N2 - OSPF NSSA external type 2
           E1 - OSPF external type 1, E2 - OSPF external type 2
           i - IS-IS, L1 - IS-IS level-1, L2 - IS-IS level-2
           ia - IS-IS inter area, I - Intranet
           * - candidate default

    K 5.5.5.5/32 via 0.0.0.0 -----> Kernel Route for ADNS
    C 10.102.148.0/25 is directly connected, vlan0
    C 127.0.0.0/8 is directly connected, lo0
    B 172.18.10.0/24 [20/0] via 172.18.30.30, vlan2, 01w5d22h
    B 172.18.20.0/24 [20/0] via 172.18.30.30, vlan2, 01w5d22h
    C 172.18.30.0/24 is directly connected, vlan2
    B 192.168.3.0/24 [20/0] via 172.18.30.30, vlan2, 01w5d22h
    B 192.168.5.0/24 [20/0] via 172.18.30.30, vlan2, 01w5d22h
    B 192.168.10.0/24 [20/0] via 172.18.30.30, vlan2, 01w5d22h
    Gateway of last resort is not set
    Site1#
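When checking several sites, it can help to confirm programmatically that the ADNS IP address appears as a kernel (`K`) host route in the routing table output shown above. The following is a minimal sketch that parses text in that format. The function names are hypothetical, and how you collect the output from the appliance is left out.

```python
import ipaddress

# A few lines in the same format as the routing table example in this article.
SAMPLE_OUTPUT = """\
Codes: K - kernel, C - connected, S - static, R - RIP, B - BGP
K 5.5.5.5/32 via 0.0.0.0
C 10.102.148.0/25 is directly connected, vlan0
B 172.18.10.0/24 [20/0] via 172.18.30.30, vlan2, 01w5d22h
"""


def kernel_routes(route_output):
    """Return the prefixes of all lines whose route code is exactly 'K'."""
    routes = []
    for line in route_output.splitlines():
        fields = line.split()
        if len(fields) >= 2 and fields[0] == "K":
            try:
                routes.append(ipaddress.ip_network(fields[1]))
            except ValueError:
                # Skip anything that does not look like a prefix.
                continue
    return routes


def has_kernel_host_route(ip, route_output):
    """True if ip/32 is present as a kernel route in the given output."""
    return ipaddress.ip_network(f"{ip}/32") in kernel_routes(route_output)


adns_present = has_kernel_host_route("5.5.5.5", SAMPLE_OUTPUT)
other_present = has_kernel_host_route("5.5.5.50", SAMPLE_OUTPUT)
```

A missing kernel route for the ADNS IP address suggests that host routing is not enabled on that address, so there is nothing for the routing protocol to redistribute.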

Anycast DNS

You can use Anycast DNS for DNS proxy virtual servers on NetScaler. When multiple DNS name servers are configured, the DNS resolver queries them using the round robin method. For example, if the resolver does not receive a response from the first server, it switches to the second server after the configured timeout expires. Switching from the first server to the second adds latency to DNS resolution. If the DNS resolvers are configured with Anycast, this latency can be eliminated.
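The latency cost of a timeout-based switch can be illustrated with simple arithmetic. This is a sketch under stated assumptions: the round-trip time and timeout values are hypothetical, and the Anycast case assumes that routing has already converged so that the single address reaches a live server.

```python
def resolution_latency_ms(servers_up, rtt_ms, timeout_ms):
    """Latency for a resolver that tries servers in order.

    servers_up lists, in query order, whether each name server responds.
    The resolver waits timeout_ms on every unresponsive server before
    moving on. Returns None if no server answers.
    """
    waited = 0
    for up in servers_up:
        if up:
            return waited + rtt_ms
        waited += timeout_ms
    return None


# Two separately addressed name servers, the first one is down:
# the resolver waits for the timeout before trying the second.
without_anycast = resolution_latency_ms([False, True], rtt_ms=20, timeout_ms=2000)

# One Anycast address: the failed site's route is withdrawn, so the
# first (and only) address queried is answered by a live server.
with_anycast = resolution_latency_ms([True], rtt_ms=20, timeout_ms=2000)
```

With these hypothetical numbers, the first case costs one full timeout plus a round trip, while the Anycast case costs only the round trip.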

DNS configuration – Example

At the command prompt, type:

    add lb vserver dns DNS 5.5.5.50 53
    set ip 5.5.5.50 -hostRoute ENABLED -vserverRHILevel ALL_VSERVERS
